Hi. I need to be able to extract millions of rows from my database on a daily basis to a UNIX FTP server. Will Toad Intelligence Central be able to do this?

Toad Intelligence Central (TIC) has one type of data object for this, the snapshot. A snapshot runs on the schedule you define, retrieves data from remote data sources (RDBMS, BI, NoSQL), and stores it locally in MySQL. Users can then view and download the data in the TIC web console.

If you want to export the data to a shared UNIX folder automatically, you will probably also need Toad Data Point (TDP). Its automation scripts can meet your request: use the export wizard to query the remote data and export the result set as a file. You can export the result set directly to a network share folder, or export it to a local file first and then add an FTP activity to upload the file. TDP can do all of this for you.

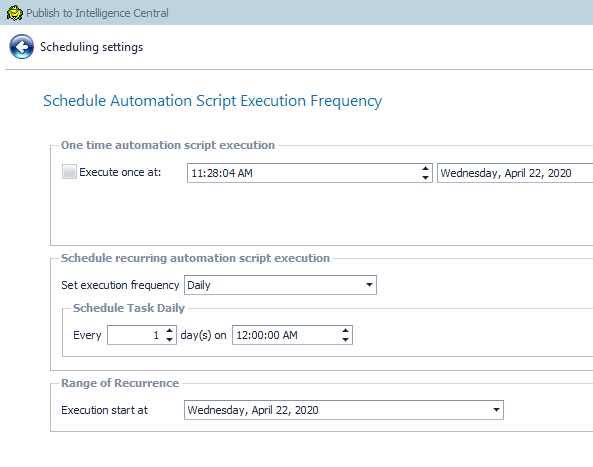

You create a TDP automation script and publish it to TIC with the schedule you define.

As for your concern about a data volume of millions of records: I ran a smoke test in my local environment, using the TDP export wizard to query a table with 3 million records and export the result as a CSV file, and it worked fine. Performance depends on the hardware of the remote database and the Toad host, and on the network bandwidth.

1) Use TDP to export a query result to the shared folder.

2) Then publish this automation script to TIC. You can define the schedule when publishing, or change it later in TDP or the TIC web console.
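If it helps to see what the export-then-upload flow amounts to, here is a rough Python sketch of the same two steps done by hand. This is not how TDP implements it and it does not use any TDP or TIC API. The function names `export_query_to_csv` and `upload_file`, the batch size, and the host, user, and file names are all made up for illustration, and SQLite stands in for your real database.

```python
import csv
import sqlite3
from ftplib import FTP


def export_query_to_csv(conn, query, csv_path, batch_size=50_000):
    """Stream a query result to a CSV file in batches and return the row count.

    Fetching in batches keeps memory use flat even for millions of rows.
    `conn` is any DB-API 2.0 connection (sqlite3 is used here as a stand-in).
    """
    cursor = conn.cursor()
    cursor.execute(query)
    rows_written = 0
    with open(csv_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        # Header row taken from the cursor's column metadata.
        writer.writerow([col[0] for col in cursor.description])
        while True:
            batch = cursor.fetchmany(batch_size)
            if not batch:
                break
            writer.writerows(batch)
            rows_written += len(batch)
    return rows_written


def upload_file(host, user, password, local_path, remote_name):
    """Upload a local file to an FTP server in binary mode."""
    with FTP(host) as ftp:
        ftp.login(user=user, passwd=password)
        with open(local_path, "rb") as f:
            ftp.storbinary(f"STOR {remote_name}", f)


if __name__ == "__main__":
    # Demo with an in-memory SQLite table (hypothetical data).
    demo = sqlite3.connect(":memory:")
    demo.execute("CREATE TABLE orders (id INTEGER, customer TEXT)")
    demo.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [(i, f"customer_{i}") for i in range(1000)],
    )
    count = export_query_to_csv(demo, "SELECT id, customer FROM orders", "orders.csv")
    print(f"exported {count} rows")
    # Hypothetical server details; uncomment and adjust for a real FTP host.
    # upload_file("ftp.example.com", "user", "secret", "orders.csv", "orders.csv")
```

In TDP the export wizard and the FTP activity cover these two steps, and the schedule you set in TIC takes the place of running such a script daily yourself.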